Why Educators Must Become AI Literate, And How to Start

Guest article by Priten Soundar-Shah

This guest article is presented on National AI Literacy Day, March 27, 2026, a nationwide day of action focused on exploring the questions “What is AI?” and “How do we prepare learners for an AI-enabled world?” This day brings together educators, students, families, and community members to discuss the ways this technology is shaping our world.

As we finish the third full school year since the release of ChatGPT, most teachers have now had some level of exposure to generative AI tools, but we still haven’t reached a consensus on what AI literacy means for educators. That shared definition is a prerequisite if we want to expand AI literacy and equip teachers to develop their students’ AI literacy in turn.

Much of our focus over the past few years has gone to helping students learn how to use AI responsibly, especially to combat cheating and plagiarism, but also with attention to productive learning, critical thinking, and online safety. We are still behind, however, on building fundamental AI literacy among teachers, and recent data bears this gap out. For example, Microsoft Education found that 80% of teachers say they are using AI, but 60% have received little or no training. We cannot keep expecting teachers to build student AI literacy without first defining what success looks like for educator literacy, and that is where we are falling short.

In some instances, AI literacy in the classroom is being defined as the ability to use chat tools to produce some sort of outcome. By that standard, we’re doing much better than we were three years ago: students and teachers increasingly turn to AI tools to produce study aids, outlines, drafts, and other content. Some schools also provide training, but it is often concentrated on a particular vendor’s tool and how to use it effectively in the classroom.

However, we are leaving out the training educators need to decide when to use the technology and when not to, and what the implications of those choices are. For example, I’ve spoken with teachers who have access to a variety of AI tools and have received training on how to use them, but still don’t incorporate them into their workflow because they don’t know whether it’s “right.”

Shaping AI Strategy for Your School

In conversations with school leaders across the country, I hear a common refrain: families are asking questions about AI, and schools without answers are losing credibility. When a parent asks, “How are you teaching my kid about AI?” the answer can’t rest on AI detectors alone. We need teachers who can speak comprehensively about AI in the classroom with students and parents. Our goal, then, needs to move beyond learning how to use AI toward building the fluency to explain the thinking behind how and why it’s used. What should a school ask itself to make sure it’s preparing educators?

Purpose: Schools need to ask themselves why they want to increase training on AI. What are the exact pedagogical goals? Is it centered on fears, market pressures, or an opportunity to serve their students better? Modeling this type of thought process, and including it in messaging to educators, helps reaffirm that all decisions on technology should be made from a student-centered perspective.

Capacity: Schools need to evaluate what their training actually provides for educators. Is professional development helping them build general capacity? Does it transfer if the specific tool or company changes? Technology training is often narrowly tailored to a single platform, but good AI PD needs to address the broader set of questions teachers are asking. Teachers need to be able to answer questions like “Should I replace this group activity with a scaffolded game on our AI tool?”

Resources: Educators need a full understanding of the resources available to them and their students. Do the resources exist to support platform subscriptions, further training, bandwidth needs, and devices? If training is provided without the right infrastructure, educators cannot act on it. Schools also need to consider not only whether these resources exist, but whether enough of them exist to ensure that everyone benefits from a positive intervention, not just a select few.

Safety: Schools need policies and procedures that guide educators in using the tools in ways that protect student privacy. Are there clear data privacy policies, and can our teachers explain them to families? Teachers need to feel empowered both in their own understanding of the risk mitigation strategies the school is adopting and in their ability to respond to criticism from parents.

With these four factors in mind, schools can ensure that any training or professional development they are providing to build AI literacy is comprehensive and impactful.

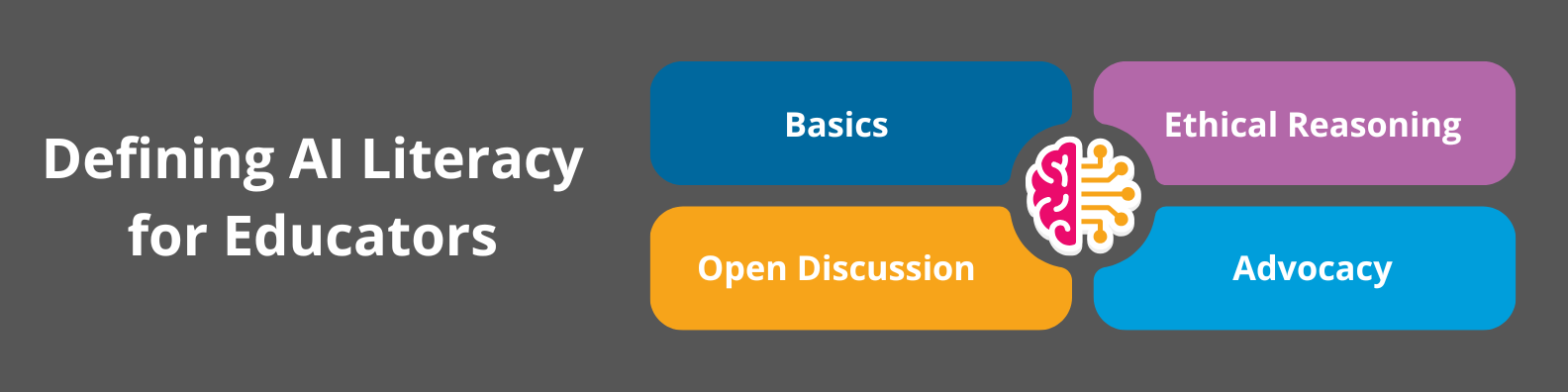

Defining AI Literacy for Educators

But what exactly are the concepts and skills educators need to learn about AI?

Basics: Educators need fluency in the basic technology behind the tools. They need to learn not just how one particular tool is set up, but how AI tools are built, what they can do, what mistakes they still make, and where educators and their students will encounter them.

Ethical Reasoning: Educators need the vocabulary and skills to explain their decisions about using the technology to themselves, their students, and parents. Building technical capacity without a solid grounding in ethical reasoning leaves educators feeling underprepared, scared, or overwhelmed at the prospect of discussing the technology with students, and even more so at the prospect of using it themselves.

Open Discussions: Pedagogical innovation happens best in a community setting, where educators can freely share ideas and concerns, build fluency together, and feel comfortable in their decisions with the support of their peers. They also need to hold these discussions with students directly, both to better understand how students are using and thinking about the technology and to model the critical thinking behind responsible decisions about AI use.

Advocacy: Educators should be at the center of conversations about how decisions on AI are made, including policies, procurement, and procedures. They should be trained on the risks and benefits of adopting certain tools, the long-term implications of technology decisions in schools, and structured frameworks for evaluating products and services. This would allow them to meaningfully guide school decision-making by combining their own classroom experience with knowledge about the technology.

By building literacy across all of these dimensions, we will prepare our teachers not just for the present state of the technology, but for whatever future developments we encounter.

Conclusion

The future of AI in education shouldn’t be shaped by technology. It should be shaped by educators who choose to engage with it thoughtfully. Before we can think about preparing our students to use this technology, we have to empower our educators to learn and use the technology in ways that build their capacity to make effective decisions. By providing educators with technical knowledge, ethical reasoning skills, and policy guardrails, we can ensure that our schools are prepared to face whatever new challenges the rapid development of artificial intelligence continues to bring.

About the author

Priten Soundar-Shah is an educator, philosopher, and author of Ethical Ed Tech: How Educators Can Lead on AI and Digital Safety in K-12 (Jossey-Bass/Wiley, 2026) and AI and the Future of Education (Wiley, 2023). He is Executive Director of Pedagogy Futures, and teaches courses on the Ethics of Ed Tech at College Unbound. He holds a B.A. in Philosophy and an M.Ed. from Harvard. Connect with Priten on LinkedIn.